High Performance Visualization

headed by Dr. Steffen Frey

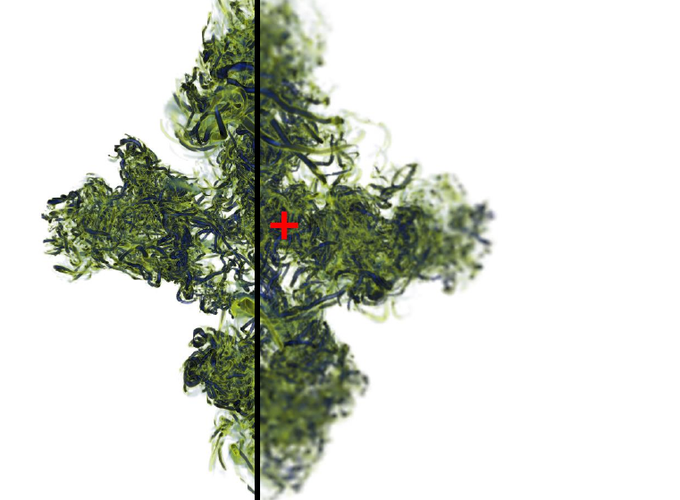

Parallel and distributed computing enable the investigation of large data sets at interactive rates. A major challenge is that the typical visualization workload depends heavily on both the data and the (types of) parameter settings. It can change rapidly during interactive user exploration, which can lead to low responsiveness, among other problems. This can be addressed by dynamic adjustments, e.g., reassigning work across devices to balance load or adapting quality parameters (like sampling density) to meet frame rate targets during rendering. Orthogonal to this are approaches that reduce computational cost by explicitly considering characteristics of the human visual system (e.g., foveated rendering exploits the fact that more detail can be perceived in the center of the field of view).
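To make the idea of adapting a quality parameter to a frame rate target more concrete, here is a minimal sketch of a feedback step for sampling density. It is an illustration only, not the group's actual method: the function name `adapt_sampling_density`, the `gain` smoothing factor, the density bounds, and the assumption that render time scales roughly linearly with sampling density are all hypothetical.

```python
def adapt_sampling_density(density, frame_time, target_time,
                           min_density=0.1, max_density=1.0, gain=0.5):
    """Return an adjusted sampling density for the next frame.

    Assumption (hypothetical cost model): render time is roughly
    proportional to sampling density, so scaling the density by
    target_time / frame_time would hit the target in one step.
    """
    if frame_time <= 0:
        raise ValueError("frame_time must be positive")

    # Density that would meet the target under the linear cost model.
    proposed = density * (target_time / frame_time)

    # Move only part of the way there to damp oscillations when the
    # measured frame time is noisy.
    adjusted = density + gain * (proposed - density)

    # Keep the density within a usable quality range.
    return max(min_density, min(max_density, adjusted))


if __name__ == "__main__":
    # Simulated renderer: a full-density frame takes 0.1 s, target is 30 fps.
    density = 1.0
    for frame in range(10):
        frame_time = 0.1 * density
        density = adapt_sampling_density(density, frame_time, 1.0 / 30.0)
        print(f"frame {frame}: density={density:.3f}")
```

In a real renderer, the frame time would come from measurement rather than a cost model, and the same kind of feedback could drive other parameters such as image resolution.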

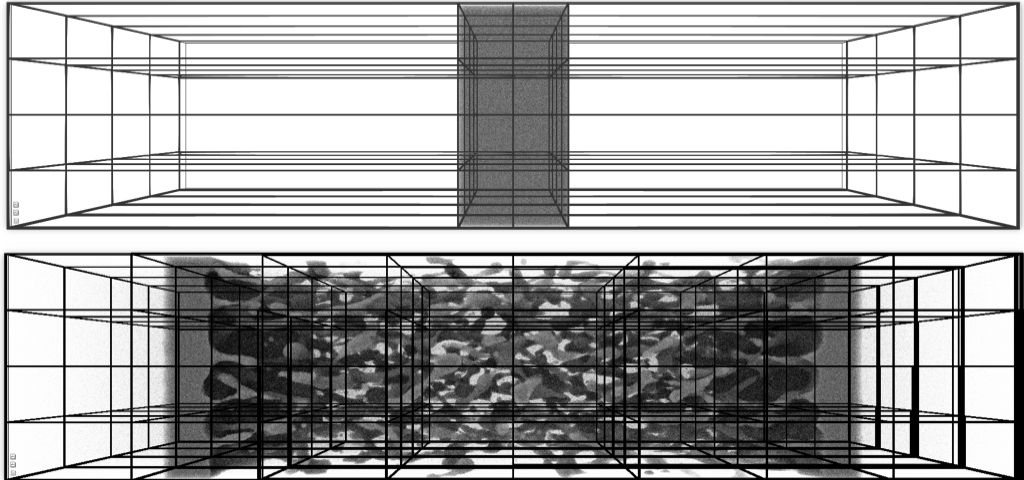

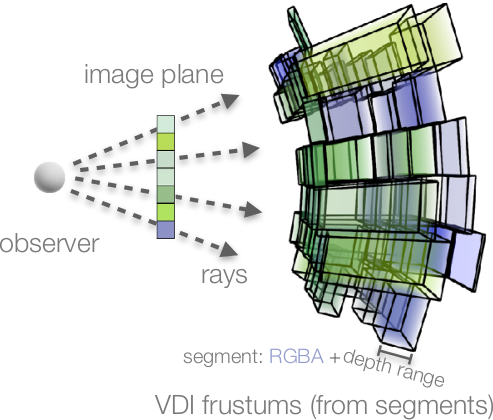

In situ visualization tackles the problem that, in some scenarios such as large-scale simulations on supercomputers, data is generated at a higher rate than it can be stored. This problem has gained significance in recent years due to the widening gap between compute performance and storage/network capabilities. In situ visualization addresses it by processing data while it is being generated. This involves several challenges, including finding an efficient data representation as well as designing and steering visualization approaches so that they run efficiently alongside a simulation.
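The basic pattern of processing data while it is generated can be sketched as follows. This is a toy illustration under stated assumptions, not a real in situ framework: `simulate` is a hypothetical stand-in for a simulation, and a fixed-range histogram is just one simple example of a compact data representation.

```python
import math


def simulate(num_steps, num_cells):
    """Hypothetical stand-in for a simulation: yields one field per time step."""
    for step in range(num_steps):
        yield [math.sin(0.1 * step + 0.01 * i) for i in range(num_cells)]


def histogram(values, bins, lo, hi):
    """Reduce a field to bin counts over the fixed value range [lo, hi]."""
    counts = [0] * bins
    width = (hi - lo) / bins
    for v in values:
        # Clamp the index so that v == hi falls into the last bin.
        idx = min(bins - 1, max(0, int((v - lo) / width)))
        counts[idx] += 1
    return counts


def run_in_situ(num_steps, num_cells, bins=16):
    """Process each time step as it is produced and keep only the summary."""
    summaries = []
    for field in simulate(num_steps, num_cells):
        summaries.append(histogram(field, bins, -1.0, 1.0))
        # The full field is not stored; only the compact summary survives.
    return summaries


if __name__ == "__main__":
    for step, counts in enumerate(run_in_situ(num_steps=3, num_cells=1000, bins=8)):
        print(step, counts)
```

Here each time step of 1000 values is reduced to 8 counts before the next step is produced, so storage grows with the size of the summary rather than with the size of the raw field.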